Commentary: The key to regaining trust in the era of AI

Published in Op Eds

Your grandmother trusted her doctor. Your mother trusted Consumer Reports. You trust the 4.7-star rating from 2,300 strangers.

But if you’ve recently used the internet to look at reviews for a stroller, or even for help selecting a health insurance plan, you likely noticed that it’s not very helpful anymore. Real information has become buried by an avalanche of artificial intelligence-produced fakery.

Today’s internet is less authentic. And less human. If you rely on it for information about anything from polo shirts to politics, that’s a big problem. The good news might just be that in an age when AI can manufacture those strangers — and their enthusiasm — overnight, people may start trusting institutions and experts again.

Partly due to the influence of the internet, we’ve seen a rejection of institutional credibility and the value of expertise in favor of the distributed judgment of everyday users. Generative AI’s infinite stream of digitally produced nonsense is making that an increasingly bad bet.

A Graphite study of 65,000 English-language web articles found that before ChatGPT, about 5% were primarily AI-generated. By late 2024 that figure had crossed 50%. In April 2025, Ahrefs found that 74% of newly created web pages contained AI-generated content. This isn’t a content quality problem; it’s a cost collapse. Producing plausible text used to require a person’s time and at least some effort. Now it requires a prompt.

Instead of being useful, many AI-written articles are lies. The issue isn’t AI hallucinations but rather generative AI’s ability to produce plausible fiction cheaply and at scale. The consequence is an endless flood of AI-created nonsense on every topic where propagating falsehoods is in someone’s interest, from Amazon product reviews to global politics.

The natural response is to be skeptical, do your own research, triangulate sources, etc. That can work if you already have expertise on the question you’re trying to answer. It falls apart for everything else. When you’re buying a stroller, you can’t crash-test every model. You rely on reviewers who are real, hopefully aren’t selling you something, and seem to have good judgment. There’s a name for that arrangement: delegated trust.

For a while, the internet’s distributed version of delegated trust (Amazon reviews, TikTok influencers) looked like it could replace institutional authority. And why not? Institutions fail all the time. They get captured, grow lazy, and protect their own interests. But when anyone can generate infinite flimflam, relying on unfiltered views means listening to whoever has most aggressively deployed fakery to drown the truth in synthetic blather.

And it gets worse. Decades of psychology research have shown that simple repetition makes claims feel true. Research demonstrated in 2015 that this “illusory truth effect” persists even when people know a claim is false. When AI enables cheap, massive repetition of plausible narratives, familiarity substitutes for credibility. That is not a problem anyone can think their way out of.

But quite often you have to trust someone. There are just too many decisions in life — from what to buy to where to invest to who to vote for — where people, no matter how capable, need guidance.

So here’s my prediction: As AI-generated content overwhelms the open web, trust will recentralize. People will fall back on recognizable institutions, named authors, and visible editorial processes. They’ll also fall back on individuals with established track records, whether a named journalist or a parent influencer who tests strollers with her own kids on real sidewalks and in actual airports.

The key in both cases is authenticity. You have confidence that a real person is providing truthful, trustworthy information. The reviewer you watch on Instagram is almost certainly real, at least today. But even that will fade as AI-generated video improves.

Institutions can fill the gap. You might disagree with this column, but you can be sure that I am a real person, because Bloomberg says I am. Someone at the organization has met me, checked my credentials, and would be fired if I turned out to be ChatGPT-5.2 in a blazer. That’s not much, but it’s more than most of what is published today.

Organizations that have built that kind of verification infrastructure are starting to see returns. The New York Times bought Wirecutter for $30 million in 2016, and it’s now a key driver of the media firm’s subscription bundle. The broader revenue category it sits in (affiliate, licensing, and other revenue) reached $70.5 million in the second quarter of 2025. Wikipedia’s army of volunteer editors, real people enforcing sourcing standards, has made it one of the most trusted sites on the internet for the same reason: visible, human curation.

Institutions that assume public distrust is permanent will underinvest in credibility. That’s a strategic mistake. Credibility compounds slowly. It takes years of editorial standards, sourcing discipline, and accountability before people treat you as a default. The institutions that will benefit from the coming recentralization of trust are the ones building that infrastructure now, before the market demands it.

The question isn’t whether trust will recentralize; it’s which institutions will be ready when it does.

_____

This column reflects the personal views of the author and does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.

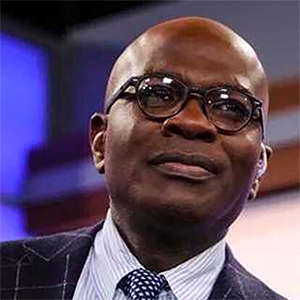

Gautam Mukunda writes about corporate management and innovation. He teaches leadership at the Yale School of Management and is the author of "Indispensable: When Leaders Really Matter."

©2026 Bloomberg L.P. Visit bloomberg.com/opinion. Distributed by Tribune Content Agency, LLC.
