Commentary: Are we prepared for a world where AI isn't at work?


Draft an important email without using AI. Write it from scratch — no suggestions, no autocomplete, and no prompt to ChatGPT to compose or revise the email.

Now ask yourself: Did it feel slower? Harder? Slightly uncomfortable?

For many of us, AI tools have quietly become part of how we think at work. And that raises a question we are not asking often enough: What happens if those tools aren’t there?

Nearly half of employees report using AI tools at work despite explicit bans by their employers, according to a recent survey by security company Anagram: a striking reminder that organizational policies and technological practice are already out of sync. When policies lag behind practice, or when tools spread informally, a deeper question arises about AI’s role in work, now and in the future.

For the past two years, the future of work has been told as a single story: AI is here, it is transformative, and workplaces must adapt or fall behind. Companies are reorienting and reorganizing teams around it. Job descriptions now quietly assume fluency with generative tools. Productivity expectations are rising accordingly.

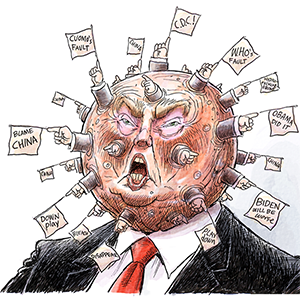

Yet even as AI becomes embedded in everyday work, permanent access to these tools is far from guaranteed. That uncertainty is already surfacing at the policy level. In a recent move, the Trump administration ordered government agencies to stop using AI systems from the company Anthropic amid disputes over how such technologies should be used in federal operations. The episode highlights how access to AI tools can quickly become entangled in legal, political and institutional battles. Other organizations may soon follow suit to comply with federal directives.

But here’s a question almost no one is asking: What if AI isn’t always there?

What if AI becomes too expensive? Too legally risky? Too privacy-invasive? What if regulations tighten? What if a major data breach or liability case forces organizations to scale back? What if public institutions, schools, health care systems or government agencies prohibit it?

We are spending enormous energy preparing for an AI-augmented future. We are spending almost none preparing for the possibility that AI might not be reliably embedded in our workplaces.

History suggests that technological change rarely follows a straight, upward line.

Consider remote work after COVID. At one point, fully remote work seemed like the inevitable future. Yet as organizations experimented, many converged on hybrid models that balanced flexibility with coordination and culture.

AI may follow a similar path. Today’s dominant narrative assumes ever-deeper integration of AI and continued growth in its use. But rising costs, sustainability concerns, legal uncertainty and public debate could slow or redirect this trajectory. Organizations may overcorrect after incidents and restrict use, and access may become uneven.

Recent lawsuits underscore that risk. Amazon has faced legal action over allegations that workplace monitoring tools intruded on worker privacy, highlighting how digital oversight technologies can quickly become flashpoints for legal and ethical scrutiny. If AI-driven systems blur the line between productivity support and surveillance, regulatory and reputational consequences could reshape how widely they are deployed.

There is another issue lurking beneath the surface: de-skilling.

AI tools are increasingly capable of drafting emails, summarizing documents, generating code, synthesizing research and even recommending decisions. They feel like frictionless assistants. And they are genuinely useful.

But decades ago, researchers studying automation warned of the “ironies of automation”: when systems fail, humans are suddenly expected to intervene without having maintained the underlying expertise.

Our recent research identified what we call the AI-as-Amplifier Paradox: AI’s dual role as an enhancer and an eroder, simultaneously strengthening performance and eroding underlying expertise. Our findings suggest we may be entering an era of gradual, almost invisible de-skilling. In other words, AI may boost performance in the moment but quietly weaken the skills underneath. We are getting better at using AI at work while losing the muscle memory of critical thinking and independent reasoning. This is an asymptomatic AI harm. It does not announce itself. We will likely notice it only when AI is unavailable.

If AI disappeared tomorrow, how many organizations could still perform at the same level of rigor and judgment?

To be sure, AI offers real and measurable benefits. The question is whether we are building ecosystems that depend on it — or ecosystems that remain resilient without it.

Public debate often frames the future in extremes: Either we embrace AI everywhere, or we resist it. But that binary misses a more plausible middle path.

Instead of asking whether work should be AI-augmented or AI-free, we should be asking whether it is AI-resilient.

An AI-resilient workplace thrives with AI but does not collapse without it. AI enhances work but does not become a single point of failure. Human expertise is cultivated continuously, not quietly outsourced. Organizations can scale back AI without destabilizing workflows.

Building for AI resilience is not anti-technology. It is an institutional and societal responsibility.

There is also an equity dimension. Access to AI is not evenly distributed. Professional licenses for AI tools are expensive, and costs are already trending upward, while free tiers are becoming more limited or disappearing altogether. Privacy-sensitive industries restrict usage. Public institutions face compliance barriers. Some organizations ban AI outright. If productivity standards quietly assume AI assistance but access is uneven, we risk creating a new fault line: workers who are AI-augmented and workers who are not.

AI tools could become a form of workplace privilege rather than shared infrastructure.

If we design our workplaces under the assumption that AI will always be cheap, accessible, and legally uncomplicated, we risk building brittle systems — systems that require constant AI support to function.

We need to rethink how we build workplaces so that they can thrive with AI and keep functioning without it.

____

Dr. Koustuv Saha is an Assistant Professor of Computer Science in the Siebel School of Computing and Data Science at the University of Illinois Urbana-Champaign (UIUC) and a Public Voices Fellow of The OpEd Project. He studies how online technologies and AI shape and reveal human behaviors and wellbeing.

©2026 The Fulcrum. Visit at thefulcrum.us. Distributed by Tribune Content Agency, LLC.
